My new novel, Colossus, arrives at the end of April. Dana Spiotta, National Book Award finalist, had this to say about it: “The slick, rich, right-wing pastor Teddy Starr is a charismatic confidence man in the American vein (part Elmer Gantry, part Jay Gatsby, part Donald Trump). As fast talking as he is, as amoral as he is, Barkan gives him a fascinating, complex inner life. This thrilling novel skewers the cynicism of our current moment, but it also strikingly renders the human drama of fathers and sons, the tension between legacy and possibility.” Sounds good? Order it now.

When did reality start to outstrip fiction for good? Philip Roth believed it was all happening around 1961. “The American writer in the middle of the 20th century has his hands full in trying to understand, and then describe, and then make credible much of the American reality,” Roth wrote in Commentary, when he was not yet thirty years old. “It stupefies, it sickens, it infuriates, and finally it is even a kind of embarrassment to one’s own meager imagination.” Gazing back more than sixty years, we can find some of this lament quaint. Roth cited Charles Van Doren, who cheated on the quiz show Twenty-One, as one of his imagination-shattering tribunes, along with Dwight Eisenhower, embodiment of the staid postwar consensus. (Roth was prescient, at least, about tossing Roy Cohn into the mix. Perhaps no single man is more responsible for the contemporary nightmare than Cohn, who was merely, in Roth’s era, the ravenous McCarthy bulldog.) But the sentiment holds. Each successive decade, it seems, has driven the practice of writing fiction further to the margins of American life. The novel is far from dead, and will probably not perish until human civilization goes with it, but each passing year offers a new assault. What might be most alienating, as a novelist working in the 2020s, is the apparent need to justify what it is you do. Some understand it—most, maybe—but there’s a segment of the populace, young and old alike, who will always comprehend nonfiction far more. Easy enough to declare you produce essays or journalism—you’re in the “reality” business—and harder, in conversation, to explain that you are a fabulist for the sake of art. Why? And if they might be, in theory, an appreciator of the novel, they come in for a Rothian lament. Isn’t life weird enough today? Why bother imagining? It’s not like any novelist could dream up Donald Trump.
Even our wonderful twentieth-century auteurs—in whatever medium they might have practiced—failed to anticipate a terrorist attack on the scale of September 11th, or at least such spectacular and strange violence. There are no fictional airplanes flying into the Twin Towers before 2001. Any portrayal of future New York City in film or fiction before that day inevitably preserves the towers. They were supposed to stand a thousand years.

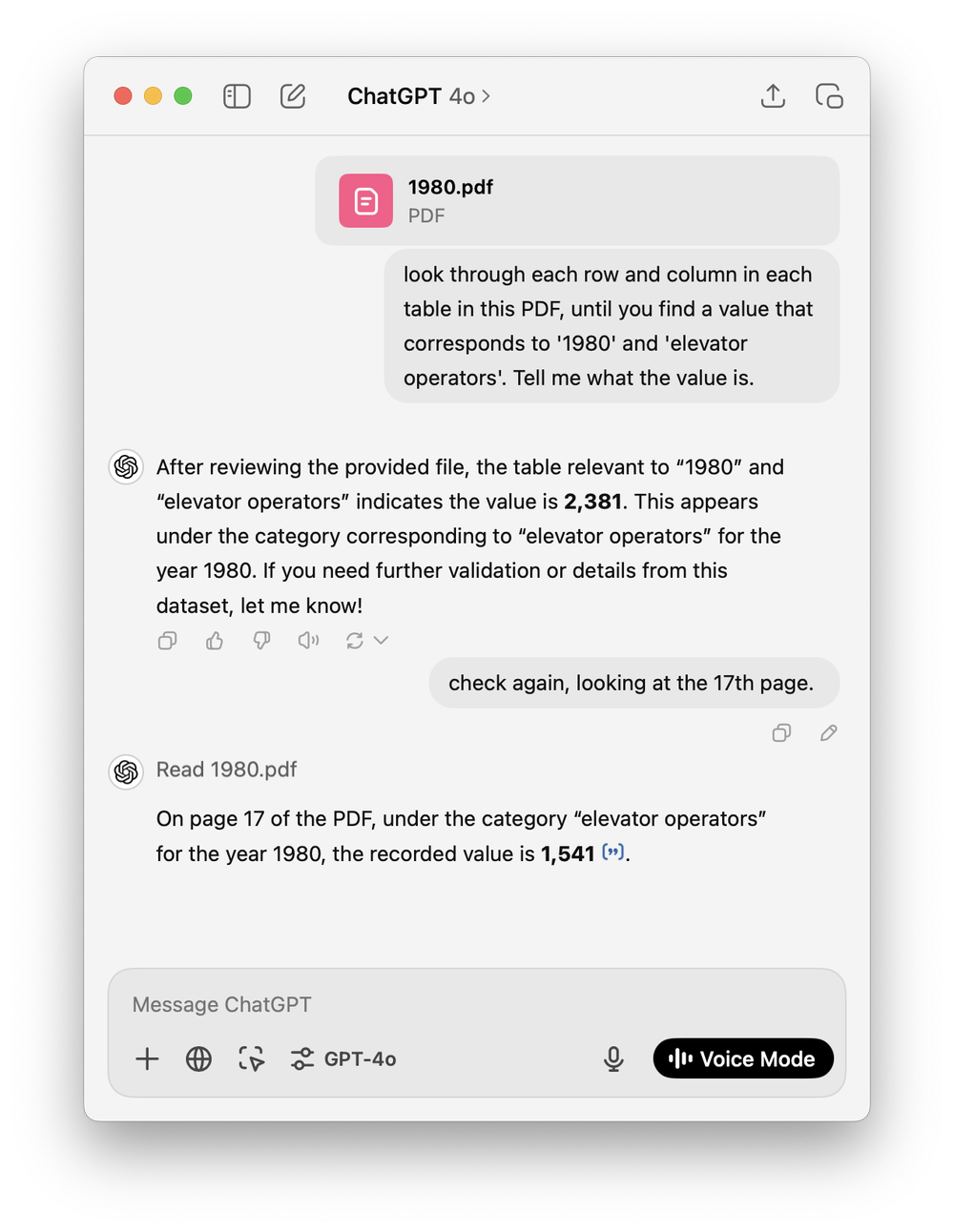

Yet the writers persist. The novels keep appearing. Roth’s Commentary essay preceded almost his entire literary career. “As a literary creation, as some novelist’s image of a certain kind of human being, he might have seemed believable, but I myself found that on the TV screen, as a real public image, a political fact, my mind balked at taking him in,” Roth wrote of Richard Nixon, who wouldn’t become president for another seven years. How we balk at the real-life phantasmagoria before us today. It is enough to make any writer of fiction decide it isn’t worth it or, on balance, it’s better to retreat—better to duck inward, paddle in the soup of autofictional neuroses, and gesture mildly at the madness out the window. What may offend me most about artificial intelligence is not that it can do a job I can—the chess grandmaster doesn’t fret that Deep Blue defeated Kasparov back in 1997—or may, someday, unemploy me, but that it’s so committed to robbing human agency. The promise is that AI can think for you, even dream for you. In a recent essay in Harper’s, Sam Kriss interviewed a pitiful young man named Roy Lee, a would-be AI mogul of some sort, and all he seemed to care about was taking the friction out of life. “I relish challenges where you have fast iteration cycles and you can see the rewards very quickly,” he told Kriss. The man read fiction until he was eight, and then found “classical books and I couldn’t understand, like, the bullshit Huckleberry, whatever fuck bullshit, and it made me bored.” He preferred, Kriss wrote, “online fan fiction about people having sex with Pokémon.” Not everyone can develop a taste for fiction, “classical” or no, but what vexed the young man most was that fiction vexed in the first place. It challenged him, forced him to think, and didn’t disgorge ready answers. AI is especially popular among college students because they’ve realized, to secure passing grades, they can offload reasoning and deduction to a machine.
A machine can be a person for them. The work of personhood is, perhaps, too great a struggle—too much of an enigma—to engage with for a lengthy period of time.

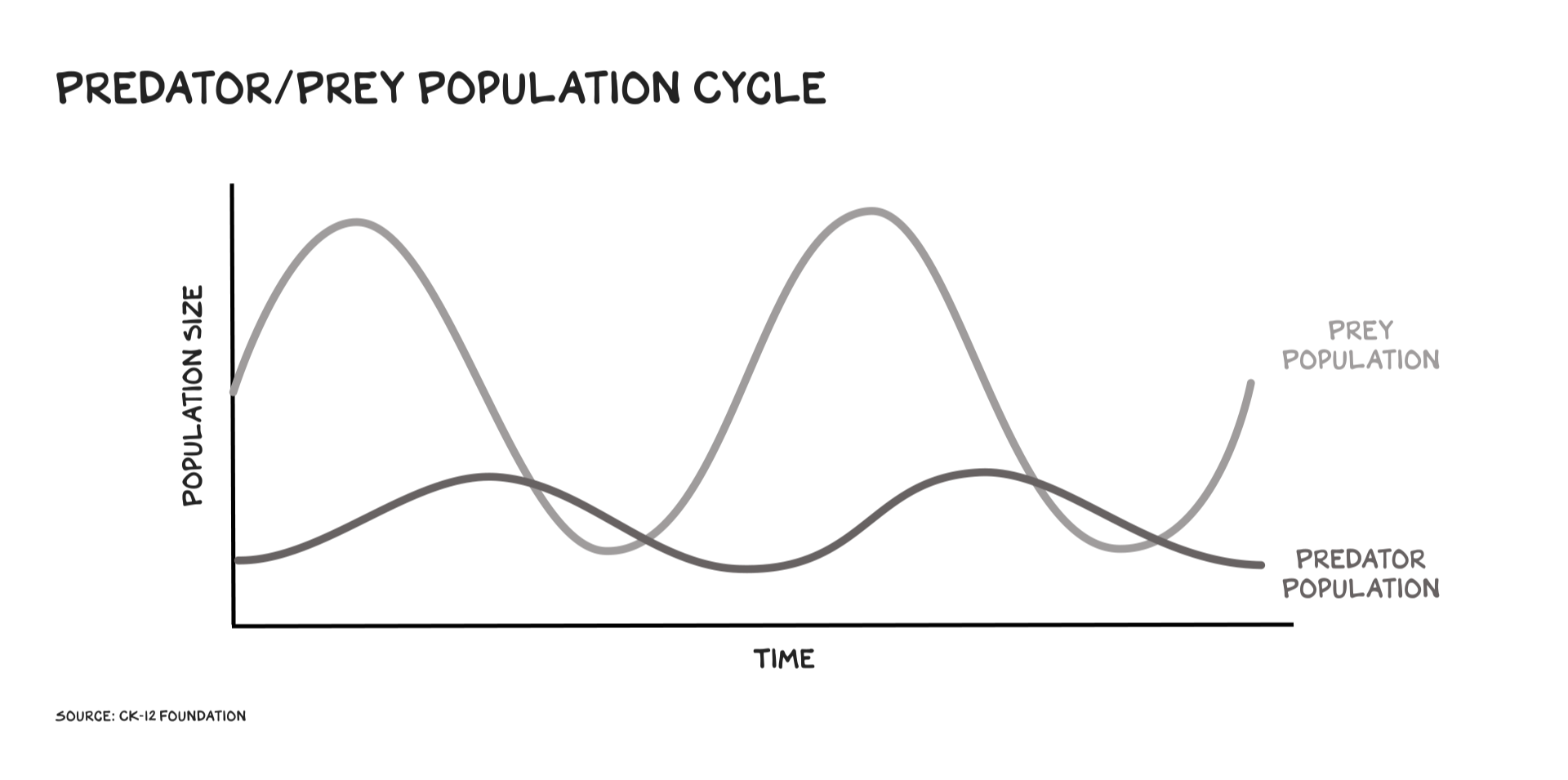

In the age we’ve entered—this machine age, AI age, whatever it might be—the purpose of fiction is no less essential than it was a century ago. In fact, in these post-analog times, it might be what is required most. Not for a moral purpose—not to be a way to make “better” or more “empathetic” people—but for the need to reclaim, fully, personhood. The coming struggle might not be left vs. right or some other searing binary but human vs. anti-human. The anti-humanists are, for now, ascendant. They are interested, theoretically, in human augmentation, a cybernetic transcendence, but the greater purpose seems to be human replacement, with only a select few—a certain billionaire elect—presiding over the mass of machines. “It also takes a lot of energy to train a human,” Sam Altman, the OpenAI founder, said recently. “It takes, like, 20 years of life and all of the food you eat during that time before you get smart. And not only that, it took, like, the very widespread evolution of the hundred billion people that have ever lived and learned not to get eaten by predators and learned how to, like, figure out science and whatever to produce you, and then you took whatever, you know, you took.”

“The fair comparison,” he continued, is “if you ask ChatGPT a question, how much energy does it take once its model is trained to answer that question, versus a human? And probably, AI has already caught up on an energy-efficiency basis, measured that way.”

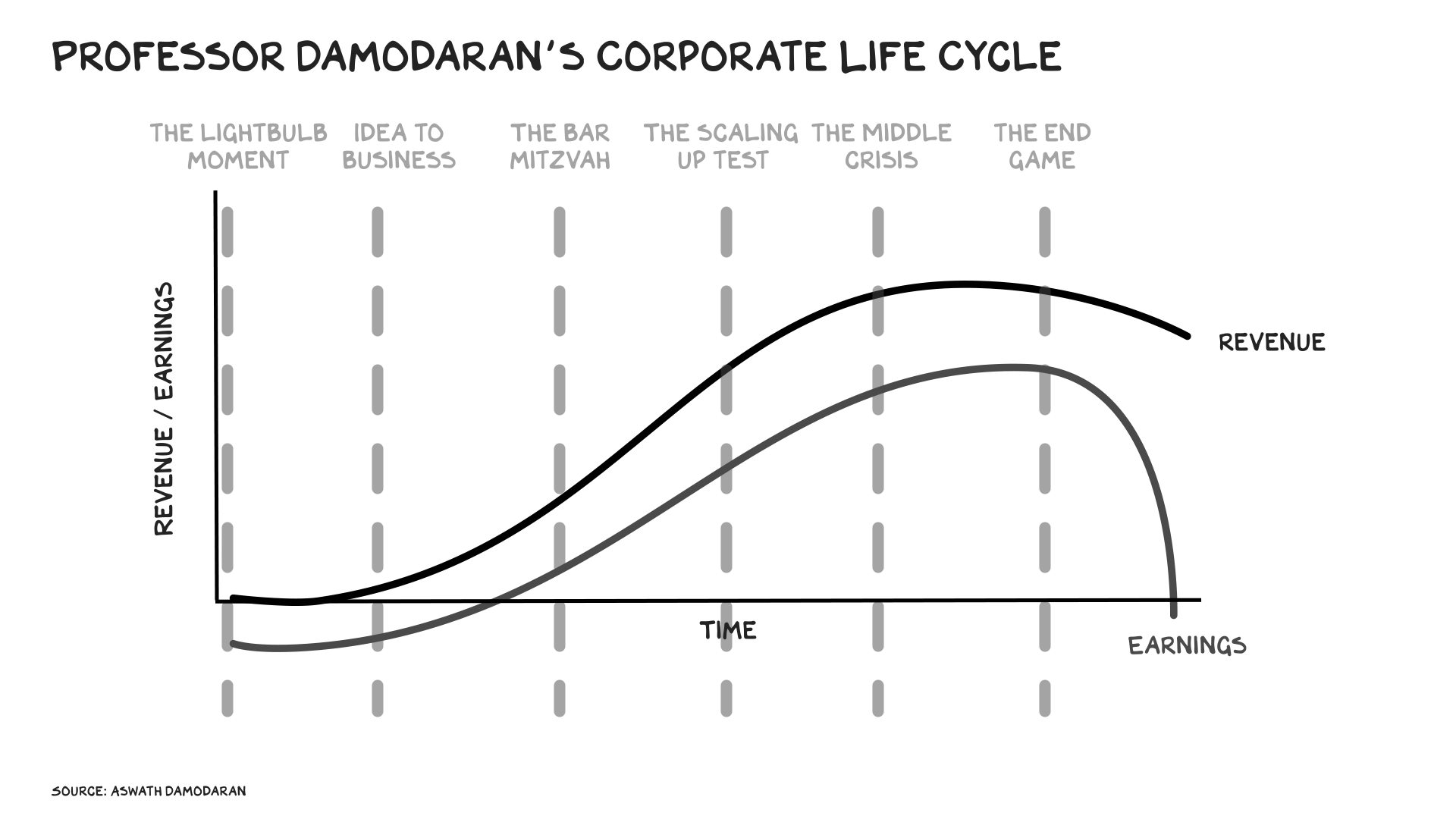

Capitalism will always prize efficiency; efficiency, in isolation, is far from evil. Neither is technology—we do not want to live bereft of electricity, penicillin, or even the computer. Digital entertainments have their purpose, too. What makes this decade different is the desire of this new billionaire class to deny human beings their intellectual and creative essence. It might not happen, but that is the dream. That is what they are yearning towards. Some are more earnest about it than others, or more honest. And the production of novels—the act itself of writing fiction—is alien to these pursuits. What separates a human being from a machine? Consciousness. And what is consciousness? What has the human being been able to do for thousands of years that other animals, largely, cannot? Imagine. The imagination is the greatest gift we have—what’s forged the cathedrals and pyramids, the paintings and poetry, and, yes, even the machines. The automobile and airplane were works of imagination. The novel, in particular, is an imagination art. It flummoxes the Roy Lees of the world, this new rising class, because it is both fundamentally human and asks so much of a human, a reader. The writer of fiction and the reader of fiction are entered, together, into a relationship of the imagination. This relationship can, quite literally, transcend space and time. The writer, long dead, can still commune with the reader through their words, and readers themselves can span the centuries. Both the printed page and the internet can offer their own forms of immortality.

The novel still comes without instructions. As a reader, you might be offered descriptions, but it’s up to you to interpret them—to properly world-build. Your Yoknapatawpha County appears differently in your mind than my Yoknapatawpha County. Cinema can impose far more on the audience. All visual media does this. All of it, to varying degrees, is more passive than fiction, which asks for the fully-fired imagination and the suspension of disbelief. Journalism is vital for a democracy but most of it is not art—not even close. New Journalism can reach those heights, though there is an inherent danger to that approach because journalism, at its core, demands facts, and facts can run into conflict with art. A fact does not have an aesthetic. The superior aesthetic might be, in fact, untrue. Journalism can be stenography or it can be more interpretive, analytic, and investigative. Still, in those formulations, it does not attempt the higher planes of fiction. Much of nonfiction doesn’t. Literature has the spark of the divine because it is so inherently unexplainable. One can read scores of writing on how to craft a novel or properly consume literature, but there are lacunae inherent to all these explanations; there is a mysticism to the art of fiction that can’t be explicated, what Martin Amis called the “white magic.” The communing of mind, body, and currents, the flow of image to fingertips, the dream of these creatures in your skull becoming transmuted into a language, maybe English, maybe another, and then this language is the mechanism that produces fresh images for the reader, fresh dreams. And the language, of course, is an aesthetic. Language is never merely utilitarian; language is art, language paints and is the painting. All of it is a miracle.

Fiction, the great imagination art, cannot be defeated as long as humanity exists. Both literally, in the furtherance of modern civilization, and in the current long war against the anti-humanists. The anti-humanists, themselves, have imaginations—AI is its own dream, derived in part from science fiction—but they are repelled by both the indulgences of fiction and its relative unruliness, its inability to offer quantifiable dividends. Why dwell within an author’s world? Why dream if you aren’t making money? Why must a writer dedicate so many hours to a craft that may not be popular or remunerative? The literary novelist, like the ancient monk, toils alone—even in groups, in scenes, the act of writing is solitary—and the only promised reward is the fueling of a spirit, the feeling that, on the level of blood, an important task was performed. As a writer, I, of course, conceive of the reader—anticipate the reader, hope for the reader’s approval—and chase worldly rewards, whatever they may be, but that simply isn’t enough, especially now. You have to want to perform the imagination art. You have to believe in it. You have to love it, or at least like it enough. Even those who suffer through writing do it because of that belief. It must matter. The writer who allows AI to perform the writing for him has lost that belief. He is an apostate. He is claiming religion while having none at all. He is a liar, a liar of the mind and the soul.

The anti-humanists insist AI is conscious. It is conscious now or will be soon. This is like offering a child a toy dog and telling him, repeatedly, the dog is real. Doesn’t it look like a dog? Can’t it bark if you press the button? The simulacrum, for the anti-humanists, is always enough because they have experienced a form of spirit-death. Or they are unconsciously hoping, in time, to arrive there, to that stage. It takes a special kind of human—an unusual segment of the species—to long for the obsolescence of their own, to be so against their own. To resent, fully, flesh and blood and brain matter, the stunning complexities of human consciousness and all, in the past millennia, that has been achieved. To make art, humans have never required more than the basics of the machine world: a paintbrush, a chisel, a word processor. The hierarchy has always been well understood. The machine is the tool of the human being to enhance the experience of being human. Tools are subordinate. Now, AI asks the human to be subordinate to the machine. Or, more accurately, AI asks nothing because it cannot “ask” anything. It is not alive. The anti-humanists make the ask. They’ve grown rich this way, and they’re rotted from within, like Dorian Gray. Except, unlike Dorian, they aren’t even very beautiful on the outside. They cannot entrance or seduce. They are, as a class, froggish and malformed, their mannerisms glitchy. They can’t willingly march us anywhere. They’ll have to do it by force.

I don’t write fiction as an act of rebellion. I do it because I love it and it gives my life meaning, and I believe, through my novels, I can make art and achieve beauty. I can exist in my highest form, as a worshipper might when in prayer. But it is fine, too, to conceive of fiction as rebellion. The more surreal, or hyperreal, our world becomes, the more fiction will need to be the ballast. The more we will need to duck away from the slopstreams, the smartphones, the machines that, like soma pumped into our bloodstreams, steal our agency away. Can it be done? On this score, I tend towards optimism. It is not optimism grounded in the actions the anti-humanists might take. I do not believe in Sam Altman, Roy Lee, or anyone else like them. Their intentions are to make money, unthinkable amounts of it, and they have no second- or third-order concerns. Rather, my hope resides with everyone else. The human beings who have still, in this decade, not forfeited themselves, not offloaded the act of imagination. Not long ago, there was an AI-generated video of a battle between Tom Cruise and Brad Pitt that looked realistic enough and drove a few commentators to declare that moviemaking as we knew it was over. What more could there be, now that perfect images of celebrities could be created almost instantly, with passable audio? What was left for the human being? It was an infantile conception of art, mistaking, again, the simulacrum for the greater purpose, why we strive to paint or sing or write or direct films in the first place. We do not care about a film because a computer has created a representation of Tom Cruise in front of us. We care about Joel in Risky Business, Maverick in Top Gun, and Ethan Hunt in the Mission: Impossible series. Brad Pitt is not AI IP; he’s Tyler Durden, Aldo Raine, and Cliff Booth. Both men look like they look, but that’s beside the point.
AI enthusiasts wouldn’t understand this—not really—because they don’t grasp the vitality of the human narrative. An actor tells a story on a screen. A machine can write a story and a machine can generate actors in the same way a machine can play chess. A chess fan isn’t less appreciative of Magnus Carlsen because a machine can perform his role. Chess retains its human dimension. Art will, too.

Humans are a story-telling species. Animals have consciousness, animals can feel pain, and the smart animals can communicate in the proximate way people can, but animals do not tell stories. Animals do not conceive art. It is art, and the quest for narrative, that separates the human from all else; for many thousands of years, this was a cause for celebration. Now the anti-humanists hope to stamp it out—slowly, then quickly. The machine will draw, the machine will act, the machine will write. The machine will perform an imitation of imagination, a weak echo, and its creators will hope the human audience will not care either way. That is the darkest outcome: not a world where, Matrix-like, artificial intelligence rises up, enslaves us, and saps our bioenergy to power its own dystopia. The actual outcome, if Altman and his ilk have their way, will be far more banal. Instead of cyborgs, we will have slopborgs, diminished, slothful human beings who have offered themselves up to AI so completely they let machines think and dream for them. Their critical and cultural sensibilities wither away. There is no audience, anymore, for any sort of art. Instead of the Matrix pods, humans will merely stay home, rotting in the digital abyss.

We aren’t there yet. People still do read, make music, watch films, and visit art museums. There is a culture, high and middle and low, even if it’s under attack. There’s an awareness, too, of the cultural and spiritual sickness of the anti-humanists. The AI revolution is not very popular. None of its progenitors are celebrated in a way Steve Jobs might have been, when Americans still had great faith in their tech innovators. Writers endure and readers endure. Print book sales are not in decline. Neither is live music. The imagination has an audience and a market. The question will be whether, in the next half century, it can keep both. We have to believe it will. That belief will come with friction; the stakes will grow ever higher. Much is on the line for the AI oligarchs. If enough of us do not take to their creations and make them economically viable, they will be out many billions, maybe begging for federal bailouts. They’ll battle to avoid that outcome as much as they possibly can. This next decade will be pivotal, for both the anti-humanists asserting their market position and the humanists trying to lay claim to what is sacred—and what has driven the progress of human civilization for thousands of years. We will have to preserve our right to imagine.

Doctor No